By Carl Weiss

Image courtesy of setupsandspaces.com

At Apple Computer, Steve Jobs

is gone but not forgotten. While it

would be hard to forget their iconic cofounder, even 3 years after his death,

it is as though Steve had just stepped out for lunch. That’s due in part to the fact that Jobs had

his hand in so much of what we consider to be high tech today. He was one of the architects of all kinds of technology,

from the personal computer to the tablet and smartphone. If you buy music online, iTunes pioneered the

way that the industry sells digital music.

If you enjoy animated motion pictures, let’s not forget that Steve was

the knight in shining armor who came to the rescue of Pixar when even the likes

of George Lucas were unable to afford to keep it afloat in the early days of

digital cinema.

Of course, there is one other

reason why anyone who visits Apple Computer corporate headquarters at 1

Infinite Loop, Cupertino, California would have the impression that Steve is due back at any moment. That’s

due to the fact that his office has remained virtually untouched since

his departure. His nameplate still graces

the door. When asked why during a March

18 Washington Post interview, Apple CEO Tim Cook responded,

“I haven’t decided about what we’ll do there. But

I wanted to keep his office exactly like it was. What we’ll do over time, I

don’t know. I didn’t want to move in there. I think he’s an irreplaceable

person and so it didn’t feel right . . . for anything to go on in that office.

So his computer is still in there as it was, his desk is still in there as it

was, he’s got a bunch of books in there. His name should still be on the door.

That’s just the way it should be. That’s what felt right to me.”

That could change in a year,

when Apple’s new flying saucer-shaped headquarters is completed. But what will not change is the large

footprint and lasting legacy of one of the titans of microcomputing. In their just-released book, “Becoming Steve

Jobs: The Evolution of a Reckless Upstart into a Visionary Leader,”

authors Brent Schlender and Rick Tetzeli

document Jobs’ triumphs and travails. While a visionary, Steve had what

amounted to blinders on in a number of circumstances that cost him big.

Image courtesy of fastcodesign.com

One of the first was a

unilateral decision he made in 1984 to air an Orwellian 60-second spot during

the Super Bowl without consulting the board until the day before it was

scheduled to air. According to the book,

the board was so horrified that they sold one of their spots so that the ad

only appeared once during the game.

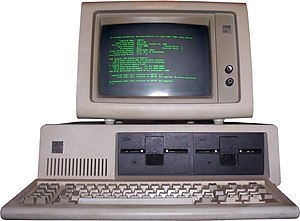

Shortly after that, Steve

decided it was time to reinvent the personal computer, the market for which was

becoming glutted since the introduction of the IBM PC and its many clones. Taking

$50 million of the company’s money, Steve assembled a team of the best and

brightest at Apple and created what he thought would be the next leap forward

in personal computing technology. Called Lisa, the computer was released

in January of 1984 priced between

$3,495 and $5,495. Even though the system was well ahead of its time,

commercially its launch was hailed as a failure, one that would ultimately cost

Jobs his job.

Courtesy of en.wikipedia.org

This

failure did not deter Jobs, who along with several other ousted Apple employees

went on to start NeXT Computer, Inc. in 1985. While NeXT only sold around

50,000 units and was ultimately absorbed by Apple for $429 million, several of

the concepts developed at NeXT were incorporated into later Apple systems,

including parts of the OS X and iOS operating systems. During his hiatus

from Apple, Steve Jobs also dabbled with another company called Pixar, in which

even George Lucas had lost faith. Pixar would later go on to produce a

number of animated features, some of which would receive Academy Awards.

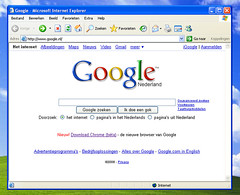

Jobs also clearly had a bead on the NeXT big trend of the 1990s, which he

referred to as interpersonal computing that would soon appear with an eerily

similar moniker: The Internet.

“When Steve Jobs returned to Apple, after

running another computer company he started called NeXT, a man named Gil Amelio

was the CEO of Apple. The company was a disaster at this point, and Jobs didn't

think very highly of him — in fact, he thought he was a bozo.

To signal his displeasure, Jobs dumped

all but one of the shares he had gotten for selling NeXT to Apple without

telling anyone. He had one share, so he was still able to attend Apple's annual

meeting, "but the sale was a

high-decibel vote of no confidence," write the authors. "Amelio felt

stabbed in the back, as he was."”

More importantly, immediately upon his

return as CEO, Steve’s first job was to replace nearly everyone on Apple’s

board. You have to remember that Steve

was absent from Apple for eleven years, during which time the company had

floundered. Within two years Jobs had

brought Apple back from the brink of bankruptcy. Beginning in 1998, Steve debuted a number of

revolutionary new products, including the

iMac, iPod, iPhone, and iPad. He also

initiated the service side of the business by opening a chain of Apple Retail

Stores and two online stores, the iTunes Store and the App Store.

As a result, by 2011 Apple had become the

world’s most valuable publicly traded company.

Unfortunately, that was also the last year of Steve

Jobs’ life. That doesn’t mean he waited

until the last minute to make sure that his legacy was preserved.

"Steve cared deeply

about the why," current Apple CEO Tim Cook told authors Brent Schlender

and Rick Tetzeli. "The why of the decision. In the younger days I would

see him just do something. But as the days went on he would spend more time

with me and with other people explaining why he thought or did something, or

why he looked at something in a certain way. This was why he came up with Apple

U., so we could train and educate the next generation of leaders by teaching

them all we had been through, and how we had made the terrible decisions we

made and also how we made the really good ones."

Apple's senior vice

president of Internet Software and Services, Eddy Cue, noted that Jobs was

"working his ass off till the end, in pain," using morphine to remain

functional. In his final years Jobs began accelerating preparations to leave the

company in good shape, including founding Apple University, but also talking

with Cook about what would happen after his death.

"He didn't want us asking, 'What would Steve do?' He abhorred the way the Disney culture stagnated after Walt Disney's death, and he was determined for that not to happen at Apple," according to Cook.


Summoning Tim Cook to his home on August

11, 2011, Steve passed him the torch by naming Tim as his successor. But even that meeting demonstrated Jobs’

unwillingness to give up the ghost.

Cook remarked to the

biography's authors, "I thought

then that he thought he was going to live a lot longer when he said this,

because we got into a whole level of discussion about what would it mean for me

to be CEO with him as a chairman. I asked him, 'What do you really not want to

do that you're doing?'"

While his passing did have a short-term negative impact on Apple’s stock price, which briefly fell 5%,

the company Steve founded is today stronger than ever. In March 2012, Fortune

Magazine named Steve Jobs the “greatest entrepreneur of our time.” Other posthumous honors included the Grammy

Trustees Award, induction as a Disney Legend, a bronze statue in

Budapest commissioned by the Graphisoft company, and a memorial that was erected

in 2013 in St. Petersburg, Russia.


Courtesy of extremetech.com

Suffice it to say that while the corporeal form

of Steve Jobs will only be with us via YouTube and previously televised interviews,

his undying spirit and lifelong list of

technological accomplishments will continue to

haunt the industry that he helped spawn.